
Model Fingerprint: A general and efficient framework for AI/ML interpretability

Interpreting complex machine learning models requires moving beyond feature rankings toward an understanding of the structures that drive prediction.

March 2026

In this paper, we propose an innovative model interpretability framework called “Model Fingerprint.” It is a bottom‑up approach to explaining machine learning models that shifts the focus from assigning feature importance to uncovering the logical structure that drives predictions.

While attribution methods such as SHapley Additive exPlanations (SHAP) faithfully quantify how important each feature is, filtering by importance alone offers a limited lens. It is much like trying to understand a movie by listing how significant each character is without considering their interactions, pivotal moments, or how the plot unfolds. Model Fingerprint identifies sets of interacting components that make a model’s behavior intelligible and produces low‑order approximations that are compact, coherent, and extensible. Fully consistent with SHAP in the limit, it reframes interpretability by connecting attribution to logic, approximation to insight, and convergence to rigor.

Key highlights

As artificial intelligence (AI) and machine learning become deeply integrated into decision-making processes, the need to interpret complex “black box” models has become increasingly critical. In this paper, we elaborate on our “Model Fingerprint” approach to model explanation and show how it shifts the focus from assigning feature importance to truly understanding the logical structure of a model.

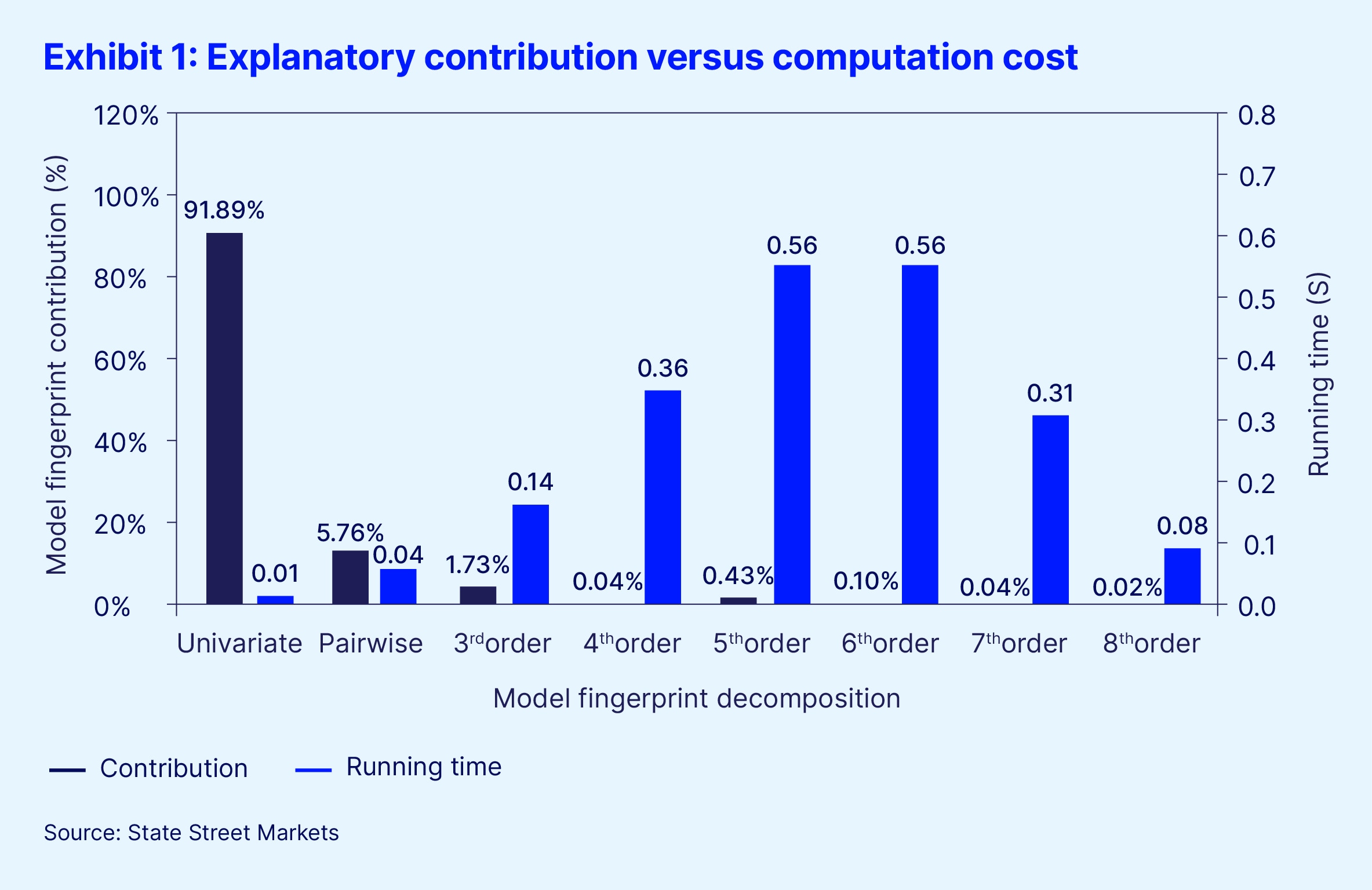

SHAP-style methods faithfully attribute feature importance for a model, and Model Fingerprint is fully consistent with them. In the limit, the cumulative contributions identified by Model Fingerprint converge to SHAP attributions. The distinction lies in how the explanation is constructed. Rather than starting with a top-down score, Model Fingerprint builds explanations from the bottom up, identifying the smallest sets of interacting components that already reveal a coherent model logic. This naturally yields low-order approximations (LOAs): compact descriptions that capture what truly drives decisions, while remaining extensible rather than reductive.
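To make the idea of low-order approximations converging to Shapley attributions concrete, here is a minimal sketch on a toy model. It is not the Model Fingerprint algorithm, which this summary does not specify. As a stand-in it uses a standard result from cooperative game theory: a feature's Shapley value equals the sum, over every feature subset containing it, of that subset's interaction term (its Harsanyi dividend) divided by the subset size. The toy `value` function and all helper names are assumptions made for illustration.

```python
from itertools import combinations
from math import factorial


def subsets(items):
    """Yield every subset of `items` as a frozenset, smallest first."""
    for r in range(len(items) + 1):
        for combo in combinations(items, r):
            yield frozenset(combo)


def value(coalition):
    """Hypothetical toy model 2*x0 + x1 + 3*x0*x1 + x1*x2.

    Features in `coalition` are set to 1, all others to a baseline of 0.
    """
    x = [1.0 if i in coalition else 0.0 for i in range(3)]
    return 2 * x[0] + x[1] + 3 * x[0] * x[1] + x[1] * x[2]


def dividends(players, v):
    """Interaction term of each subset S (Moebius transform of v)."""
    return {
        S: sum((-1) ** (len(S) - len(T)) * v(T) for T in subsets(sorted(S)))
        for S in subsets(players)
    }


def shapley(players, v):
    """Exact Shapley values from the classical weighted-marginal formula."""
    n = len(players)
    phi = {}
    for i in players:
        others = [p for p in players if p != i]
        total = 0.0
        for S in subsets(others):
            weight = factorial(len(S)) * factorial(n - len(S) - 1) / factorial(n)
            total += weight * (v(S | {i}) - v(S))
        phi[i] = total
    return phi


def low_order_attribution(players, m, max_order):
    """Attribution using only interaction terms of size <= max_order."""
    return {
        i: sum(val / len(S) for S, val in m.items() if i in S and len(S) <= max_order)
        for i in players
    }


players = [0, 1, 2]
m = dividends(players, value)
exact = shapley(players, value)

for order in (1, 2, 3):
    approx = low_order_attribution(players, m, order)
    gap = max(abs(approx[i] - exact[i]) for i in players)
    print(f"order {order}: {approx}  max gap to Shapley = {gap:.3g}")
```

In this toy case the order-1 attribution is (2, 1, 0), which misses both interactions. The order-2 attribution is (3.5, 3.0, 0.5), which already matches the exact Shapley values because the toy model has no three-way term. Real models generally need higher orders before the gap closes, and enumerating all subsets as done here is only feasible for a handful of features.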

Model Fingerprint reframes what it means to understand a machine learning model. It advances the science of interpretability from measuring importance toward explaining reasoning – an essential step for models we must not just use, but understand.